Jess Cartner-Morley

We are living in a period of political anti-intellectualism. But in pop culture, clever is the new cool

At the very moment Trump’s rambling speeches and meme-fied inanity threaten to overwhelm us, fashion, music and film are moving in the opposite direction

Put down your negroni, hang up your Prada handbag and pick up a paperback. Next time someone whips out their phone to take your picture, grab your reading specs, not your lipstick. Smart is the new hot.

Pop stars are launching book clubs – the 1970s had Studio 54, this decade has Dua Lipa’s online literary salon Service95 – or joining Substack, where Charli xcx recently published a 1,800-word essay interrogating why it is that as a pop star “you cannot avoid the fact that some people are simply determined to prove that you are stupid”. The supermodel Kaia Gerber (who is fashion royalty – her mum is Cindy Crawford) passes the time backstage at fashion week reading Didion, Duras and Camus, not Vogue.

Three years ago, we dressed in pink to go to the cinema to watch Barbie; in 2026, the mind-bendingly structured, early-Victorian masterpiece Wuthering Heights is the talk of Hollywood, and Netflix is betting big on Emma Corrin as Elizabeth Bennet in Dolly Alderton’s forthcoming adaptation of Pride and Prejudice. Intellect and glamour – which have always sat at separate tables in the high-school canteen of pop culture (you can’t sit with the cool kids if you are a teacher’s pet, everyone knows that) – are flirting hard.

“This is real,” says trend forecaster Lucie Greene. “There is a backlash against visually focused lifestyle content, which has become so co-opted by brands. Gen Z want more. They want knowledge. They want to go deep down the rabbit hole, on podcasts and on Reddit as well as on TikTok and YouTube.” Meet you behind the bike sheds to discuss Walter Benjamin over a cigarette, babe.

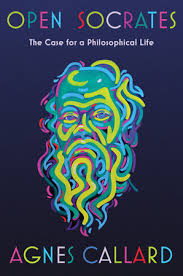

“Reading is so sexy,” Gerber said in 2024 when she launched her book club, Library Science. (First pick: Martyr!, the poet Kaveh Akbar’s anarchic debut novel about grief, martyrdom and addiction – hardly an easy read.) She’s right, obviously, but this is bigger than books. It is thinking, as well as reading, that is cool again. The novel under the arm is just the lapel pin of the brainiac. When the brilliant British designer Louise Trotter was appointed to the Italian luxury label Bottega Veneta last year, top of her in-tray was to cast models for a new advertising campaign. The beauties she chose? Zadie Smith and the octogenarian sculptor and poet Barbara Chase-Riboud.

If we were dumbing this down, I could say that brains are the new boobs. But dumbing down is so over, so let’s dig a little deeper into how we got to a place where Dua Lipa posts selfies on Instagram reclining in a fancy hotel room in full glam and a cocktail dress, winking to the camera over her copy of Just Kids by Patti Smith, and actor Jacob Elordi is papped in an airport bookshop flicking through playwright Suzie Miller’s Prima Facie with a second paperback conspicuously stuffed in a pocket.

It all started with a Kardashian. Of course it did – say what you like about that family, their cultural instincts are rarely wrong. In 2018, Kim Kardashian announced that she was embarking on the long, unglamorous process of qualifying for the California bar. For two decades the Kardashians have anticipated and shaped what aspiration looks like. Kardashian’s pivot towards legal study was an early experiment in whether intellectual seriousness could be folded into celebrity without destroying its commercial appeal.

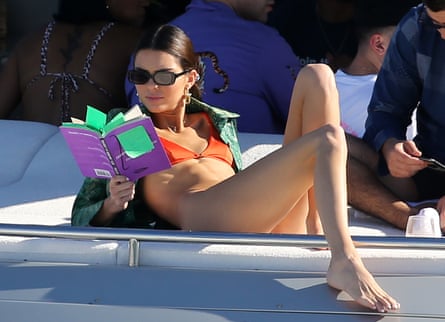

A few years later, reading itself began to creep into the frame. In the summer of 2021, the first season of The White Lotus aired. Its young, beautiful characters were rarely without books by the pool. Sydney Sweeney’s Olivia read Friedrich Nietzsche’s Beyond Good and Evil and Sigmund Freud’s Civilization and Its Discontents, while Brittany O’Grady’s Paula was shown with Frantz Fanon’s The Wretched of the Earth. The books functioned as character shorthand, a way of signalling that these generation Z kids had hinterlands, but they were also a visual assertion that thought could be televisual. Around the same time, paparazzi shots of models and actors reading (Kendall Jenner photographed with a paperback on a yacht in 2019; Emily Ratajkowski reading Joan Didion in bed in 2020) became minor viral events, with the text in their hands scrutinised as closely as their clothes.

Ratajkowski’s visibility in this space presented a particular challenge to the status quo, because she is not just pretty, she is hot. And here she was, publishing essays, giving interviews about feminism and power, and, in 2021, releasing My Body, a bestselling collection that insisted that a woman could be a serious writer without backing down on the day job of being a sex symbol. There were echoes of this mind-bend in 2025 when the avant-garde pop star FKA twigs, once notorious for her athletic pole‑dancing skills, delivered a keynote address at the British Library, explicitly calling out what she described as the “dumbing down” of public life. “Where are the thinkers?” she asked, demanding intellect as a civic right, a utility, a public service.

This is a very strange moment for smart to get hot. We are living through a period of pronounced anti‑intellectualism. Expertise is dismissed as elitism, procedure as dull, facts as irrelevant. Trump’s rambling, repetitive speeches have warped public discourse worldwide. The political motivations are clear, since anti-intellectualism has always been foundational to authoritarianism, depriving the people of the framework with which to question power (no need to remind anyone who burned the books). In the US, elite universities are being defunded, investigative newspapers such as the Washington Post depleted and defanged. Everywhere, we are being dumbed down for profit by an addictive social media, which turns us all into remote-working battery chickens of the attention farms of Silicon Valley. We feel powerless in the face of this, and our acceptance of it is insidious: in the modern phrase, “It’s not that deep”, and in the casual they’re-all-as-bad-as-each-other disengagement that is undermining democracy.

And yet, at the very moment meme-fied inanity threatens to overwhelm us, some threads of popular culture are moving in the opposite direction. The contrasts can be jarring. Last September, during the week that Trump called climate change “the greatest con job” during a speech to the UN, the New York catwalk shows were taking place. At Proenza Schouler, the show notes came footnoted with a reading list of French feminist writings, including The Third Body by Hélène Cixous and Speculum of the Other Woman by Luce Irigaray, while Joseph Altuzarra left a copy of The Memory Police by Yōko Ogawa on every seat. One day in January, I read a news report about Trump confusing Greenland with Iceland four times in one speech, and then toggled to Vogue to read about the latest Saint Laurent menswear show, which was inspired by designer Anthony Vaccarello’s reading of James Baldwin’s seminal 1956 novel Giovanni’s Room.

Did you raise an eyebrow at the back there? It is worth interrogating the scepticism that creeps in any time fashion or glamour are put in the same category as thought or intellect. I refer you here to Dua Lipa’s author interviews, which are superb. In a chat with David Szalay, the author of Flesh, she asked him about the authorial decision to omit the protagonist’s father from a story that has so much to say about masculinity – a point that, Szalay notes, no reviewers had picked up on.

Rock’n’roll may have long set itself in opposition to being square, but the history of pop lyrics debunks the idea that pop stars are stupid. “Celebrities get called stupid if they don’t read, and stupid when they do,” says Hali Brown, the 30-year-old co-founder of BookTok’s @booksonthebedside. “So I don’t really know what people want them to do.” She has no time for the pearl‑clutching over “performative reading”. “Young people are definitely not divested from celebrity culture. If this makes people feel better about reading out and about, that’s good, right?” As Greene points out, “Creatives have always had a close and thoughtful sense of the zeitgeist. Eloquence is part of being a cultural leader.”

It’s complicated. Generation Z are, as Greene puts it, “totally paradoxical, just generally. Worried they spend too much time online, yet spending a huge amount of time online. Worried about the environment, yet Shein’s biggest audience.” It is certainly not as simple as people reading more. The cultural heat runs counter to the general direction of travel. Long-term studies show sustained declines in reading across much of the anglophone world. In the UK, a survey by the charity the Reading Agency found that leisure reading among adults has fallen steadily over the past decade; in the US, the National Endowment for the Arts has reported a sharp drop in literary reading since the early 2000s; in Australia, similar trends show fewer adults reading books regularly, particularly men.

Faced with this decline, the book industry is using fashion as a lever with which to exert counter-pressure. James Daunt, the managing director of Waterstones, Britain’s largest bookstore chain, has spoken about the growth of print book sales among younger readers. Literary fiction and classic novels, in particular, are seeing renewed interest from under-35s. Booksellers report that many customers arrive already primed by social media. They know the covers of novels they have seen referenced online, even if they are not yet sure what they are about. As one London-based bookseller says: “Covers matter enormously, but so does the idea that reading is part of who you are, not just something you do alone.”

And so reading is more visible than it has been in years. You have already seen the books. The blue-and-white covers of Fitzcarraldo Editions peeking out of tote bags. Dog-eared copies of Wuthering Heights on the train, sudden ubiquity lending it the air of a seasonal accessory. Book clubs have proliferated, hosted in bars or online spaces, with discussion as much about identity and feeling as it is about plot. Alongside this sits BookTok, the sprawling corner of TikTok where users, many of them in their teens and early 20s, recommend novels, cry on camera about fictional deaths, annotate favourite passages and turn backlist titles into surprise bestsellers. In a culture saturated with surfaces, the book becomes proof, if not of depth, then at least of the desire for it.

Across the UK, booksellers describe younger customers who are less interested in genres than in signals: what a book says about them, how it looks on a table, how it fits into a life. At BookBar, the London hybrid bookshop and wine bar (with one branch in Islington and another in Chelsea), the founder, Chrissy Ryan, has spoken about how reading has become social again – performative, or just communal, depending on how you look at it. Events sell out quickly. “People want to talk about what they’re reading,” notes Ryan. “They want it to be part of their social life.”

In an age of short attention spans, “not being a slave to your phone is kind of seen as a flex”, says Brown. Recently, she stopped using Spotify and has started listening to the iPod she had when she was 10. “People are realising that their best ideas come from times where they’re engaging with a world beyond short-form content.”

The publishing industry has pounced on an opportunity, inviting figures from outside its traditional gatekeeping structures into positions of authority. Sarah Jessica Parker recently served as a judge for the Booker prize, her presence aiming to signal not dilution but reach. Pandora Sykes is the author of Books and Bits, the most popular UK books newsletter on Substack, with more than 100,000 readers. Before she was a literary tastemaker, she was a front row fixture as a newspaper fashion editor. “There really didn’t seem to be a credible way in which I could be attending fashion shows and being photographed in some zany get-up, while also writing book reviews or interviewing authors,” she says. “I had to pick my poison, and it really did feel that binary: it took ages for people to take me seriously in the culture space, so I really limited the fashion side.” But Sykes’ eye for the zeitgeist and sense of style are compelling for readers for whom a love of books sits alongside less highbrow interests. “Most of us aren’t exclusively readers, or fashion plates. And I don’t think it’s something we apply to men, the idea that everyone who likes books is austere and highfalutin and couldn’t possibly enjoy the lowbrow, or the ornamental. She can, she does, she will again!”

Note the character arc of the book bag. What began with the quietly earnest Daunt Books tote and the New Yorker canvas bag has become a high-fashion money spinner. This season’s must-have Dior handbags are emblazoned not with logos but with titles: Les Liaisons Dangereuses, Madame Bovary, Les Fleurs du Mal. Literature is lifestyle, knowledge is brand. There is something undoubtedly troubling about this pricing of intellectual aspiration – particularly, in the case of the Dior bag, at around £2,400 – but it would be naive to ignore that books have always been aesthetic objects. Home libraries were status symbols long before Instagram; the orange spine of Penguin classics is as recognisable as any designer monogram. The book cover as design object is nothing new: Francis Cugat’s 1925 illustration for the first edition of The Great Gatsby, spectral eyes over blazing cityscape, a fever dream of hedonism and melancholy, is a design classic. In 2024, a signed first edition of it sold for $425,000 at Heritage Auctions, according to the Artnet Price Database.

Any attempt to reduce this to books versus phones will gain you a B-minus at best. Genuine skill and knowledge are also becoming more prized “in online content and within commerce”, says Greene. “Look at the emphasis on athletes, footballers, hockey players and pro skaters as the new fashion and luxury influencers and partners. Culture is looking to people with genuine skill.”

Instagram, once the engine of aspiration, is losing ground to Substack, where depth, voice and argument are given a modern spin, with thinking monetised as expertise plus personality. Podcasts, too, bring long‑form thinking into culture’s fluffier regions. Bella Freud’s long-running podcast, Fashion Neurosis, treats fashion as a route into biography, memory and theory, with recent interviewees including Debbie Harry, Christy Turlington and Annie Leibovitz.

But is smart really the new hot, or is smart only the new hot if you are also hot? Are we just turning this into yet another aspect of life where only pretty people count? As so often, the patriarchy has a lot to answer for here. Intelligence is newsworthy in attractive women, because brains and beauty have for so long been cast as opposing traits. The clever woman was frumpy, unfeminine, difficult. The beauty‑brains binary, which served the patriarchy well, has been internalised. If there is something slightly irritating about watching the already glamorous add intellectual credibility to their portfolios – and we may as well own it – there is also something quietly radical in the refusal to dim one quality in order to legitimise another. The age‑old demand that women choose one identity or the other is being challenged. UK publishers, notably, report that the most engaged reading communities skew young and female. Reading groups, live events and book clubs are dominated by women in their 20s and 30s. It’s interesting that the two major book-to-screen adaptations of this year – Wuthering Heights and Pride and Prejudice – are of novels beloved by female readers.

Where is politics in all this? It used to be the way for young people to engage with the world of ideas. They gathered to talk about revolution, not 19th-century fiction. But with politics now a war zone, who can blame the youth for tapping out? With an assertive strain of modern conservatism consolidating power in the US, and overt political expression becoming more risky, education offers a way of remaining intellectually active without stepping into the blood and gore of the culture‑war colosseum. In a fractious and even dangerous world, reading, quoting and learning are ways to signal seriousness and curiosity without inviting backlash. As political discourse grows coarser, culture finds new places to store complexity.

“Some of this is about expanding what it means to be smart,” says Brown. “There are lots of people who think Trump is smart because he’s a businessman, or that manosphere podcasters are smart because they use scientific words and for some reason always speak really fast. But intellect is more niche. This is about people who want to reflect on the world not just because they want to conquer it or make money. The online reading community is a place where we can talk about things like the patriarchy in an accessible way, through the lens of stories and characters, and I think that can feel healthier.”

With humanities degrees under threat, education takes on the texture of luxury. On TikTok, being “disgustingly educated” is, for want of a better word, the hot new aesthetic. And AI, as ever, is part of the picture. For centuries, we have exercised our brains in our jobs, but if the robots are coming for the jobs, perhaps it is logical that we reclaim our brains for our own use. “And it’s also about trying to make sense of the crazy world we’re living in,” says Greene. “A bit like how Victorians were obsessed with ancient history to make sense of the unprecedented cultural, economic and societal change they were going through.”

Being smart is sexy now. Not only the kind of sexy that makes people fancy you, but the kind of sexy that generates discourse. The kind of sexy that accrues value. The flipside of a dumbed-down world is that thought has scarcity value, and what is rare has always been hyped. Knowing stuff is to the 2020s what limited-edition trainers were to the 2000s. Does that make sense? Whatever. It sounds cool, and that’s what counts.